Global Health

Digital innovation continues to rapidly transform healthcare while raising several policy challenges, including privacy, security, data sharing and interoperability, transparency, safety and effectiveness, and equity. These challenges are global and require multistakeholder collaboration. The pandemic has increased the demand for digital health and AI-enabled tools and, correspondingly, the urgency of developing policy solutions.

In the coming year, we expect to see continued emphasis by health sector stakeholders worldwide on addressing these challenges, with the goal of safely, effectively, and equitably scaling health digitization.

Virtual care will be increasingly important in healthcare delivery

Worldwide innovation in virtual care, including remote patient monitoring tools and telehealth platforms, will continue to augment patient care for an expanding spectrum of clinical applications.

The pandemic has sharply accelerated this trend. In some countries, including the United States, policymakers have made temporary or permanent regulatory changes affecting the use of such tools in order to expand access. These changes have been supported by efforts to expand the enabling infrastructure for adoption.

Post-pandemic, policymakers are likely to focus on evolving existing laws and regulations to support continued use and adoption by providers and patients. In some countries, this will involve developing a new legal structure to support use of digital technologies, creating opportunities to leverage best practices and improve global regulatory harmonization. We see virtual care being a critical tool for expanded access to care in remote areas worldwide.

More rules will be applied to data usage

Given the importance of health data to patient care, governments and businesses will continue to work to determine how to simultaneously improve access to and protect health data.

In many countries, patients have a right to access their health data. In the United States, this long-standing patient right has been strengthened by recent interoperability regulations, which strive to make it easier for patients to access their health data electronically and for providers to access and use such data to optimize patient care. The expansion of individual data rights in many countries, along with greater portability of health data, will drive new waves of innovation that make use of that data. This will likely prompt regulators to increasingly scrutinize the use of health data in consumer applications.

Meanwhile, there is increased attention to the value of using real-world data (RWD) to support regulatory approvals. RWD is often leveraged in U.S. regulatory approval processes, and other governments, including in the Asia-Pacific region, have begun to explore RWD policies.

The EU’s General Data Protection Regulation (GDPR) has formed a basis upon which many governments have developed their own privacy rules. In the United States, most new privacy laws exempt health data that is subject to HIPAA but may still cover health data in mobile apps that fall outside HIPAA. Policymakers are likely to focus on balancing access to data against privacy and security protections for that same data.

AI guidance will come into sharper focus

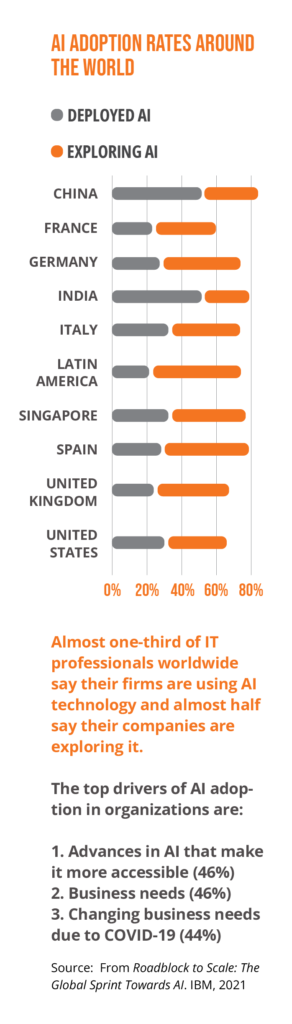

Many stakeholders have developed high-level ethical principles for AI systems, and these initiatives often center on accountability, bias, and transparency. This “soft law” approach is likely to be “hardened” as new regulations are implemented.

For example, the European Commission’s proposed Artificial Intelligence Act (EU AI Act), which takes a risk-based approach, would have broad implications for AI-enabled health tools, especially if they are considered “high-risk.” Since the Act is novel, it may enjoy a “first-mover effect” akin to GDPR, in which other governments pass similar regulations.

Key activities are occurring elsewhere in the world. China has implemented rules, effective March 1, governing algorithms with key functions in the digital economy, including those that set prices and those that recommend and filter content. In Singapore, the Infocomm Media Development Authority’s Model AI Governance Framework, the first of its kind in Asia, provides actionable guidance to businesses on AI ethics and governance issues across sectors, with the goal of promoting understanding and trust.

Governments have also begun to issue national strategies to develop their domestic AI industries, which often include financial commitments, such as recent investments in Chile and India. These developments provide potential opportunities for industry to partner with governments as they develop their economies’ AI capabilities.

Finally, strategies focused on AI and healthcare are likely to receive further attention. For example, regulators in the U.S., UK, and Canada have collaborated on guiding principles for Good Machine Learning Practice (GMLP) in medical devices that use AI and machine learning, a key instance of ongoing harmonization efforts. Potential future implementation activities relating to the GMLP guiding principles will be important for stakeholders to monitor.